We have a particular web product that we use for all of our clients. It is a pretty incredible system and has several hundred clients. We are one of their largest ones, so between our implementation and their normal customer needs, it’s understandable why they cannot accommodate every one of our requests right away.

That being said, there was one little “feature” that went slightly against one of our policies. So, what to do? Well, I put on my curiously tipped white hat and go to work. One of the cool features of the system is that they allow us, the admins for our group for all intents and purposes, to update portions of the page for our users. They don’t escape HTML, so it turns out I’m able to inject my own JavaScript code.

Ooohhh, that’s bad. 😉 I let the company know but I proceed on.
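For anyone wondering what “escaping HTML” would look like, here is a minimal sketch. It is not the vendor’s code, and `escapeHtml` is a name made up for this example. The idea is to turn the characters that HTML treats as markup into entities before the text is written into the page, so a pasted script tag shows up as plain text instead of running.

```javascript
// Hypothetical helper: replace the characters HTML treats as markup
// with their entity equivalents so user-supplied text is displayed, not executed.
function escapeHtml(text) {
  const replacements = {
    "&": "&amp;",
    "<": "&lt;",
    ">": "&gt;",
    '"': "&quot;",
    "'": "&#39;",
  };
  return String(text).replace(/[&<>"']/g, (ch) => replacements[ch]);
}

const sample = '<script src="x.js"></script>';
console.log(escapeHtml(sample));
// prints: &lt;script src=&quot;x.js&quot;&gt;&lt;/script&gt;
```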

Turns out they, being the creative bunch they are, make use of jQuery. This is definitely turning into a possibility with my toolbox already stocked and ready to go.

The particular portion of the page I choose to launch my JavaScript from is ideal because it is on the navigation bar, ensuring that it will be displayed on almost every screen. Because we can only change content for our own users, my changes will only affect them and not the users of the vendor’s other clients. However, with 5,000 users I had better make sure my code is well tested and clean. I still have the ability to disrupt all 5,000 of them if I make a bad mistake. Point noted.

The vendor puts a character limit on the particular area of the page I’m changing. That means I have to inject JavaScript that tells the browser to load a larger script from elsewhere. No big, I put the script on our department website. Hmmm, not so good. The system is now throwing a warning that I’m loading JavaScript from a non-secure source. Hmm. Sure would be nice if I could load the script directly on my vendor’s server.
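A loader small enough to fit under a character limit can be very short. The following is a minimal sketch of the idea using plain DOM calls, not the exact snippet from this project. `SCRIPT_URL` and `loadExternalScript` are made-up names, and the URL is a placeholder.

```javascript
// Hypothetical URL: the real location of the larger script is not given here.
const SCRIPT_URL = "https://example.com/library/myscript.js";

// Create a <script> element pointing at the larger file and append it to <head>,
// which makes the browser fetch and run it.
// `doc` defaults to the page's document; passing it in keeps the function testable.
function loadExternalScript(url, doc = globalThis.document) {
  const tag = doc.createElement("script");
  tag.src = url;
  doc.head.appendChild(tag);
  return tag;
}

// In the browser, the injected snippet would simply call:
// loadExternalScript(SCRIPT_URL);
```

Since the page already ships jQuery, its `$.getScript(url)` helper would be an even shorter way to do the same thing.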

Well, I can. 🙂 The vendor also allows us to upload documents to a library that our users can download from. So, I upload the script. Uh, oh, didn’t work. Turns out they block all but a few extensions. So, instead of calling it myscript.js I change it to a text file called myscript.txt. That uploads great and, guess what, the browser is happy to execute it. Great! On my way.

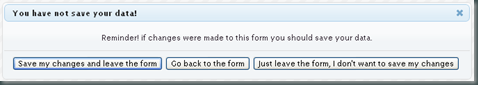

After a few tests it turns out I’m able to quite nicely make our little “issue” a thing of the past. First I check to make sure the page in question is the one being viewed. That way the bulk of the code (all 6 lines of it) runs only when necessary. Then it cleans up after itself by removing my injected code from the page DOM. Nice!
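The check-then-clean-up pattern could be sketched roughly like this. The path, the element id, and the function names are all hypothetical, since the real ones aren’t shown here, and the sketch assumes the cleanup runs on every page rather than only on the target one.

```javascript
// Hypothetical values: neither the target page nor the injected element is named in the post.
const TARGET_PATH = "/reports/summary";
const INJECTED_ID = "injected-loader";

// `win` is the browser window; `applyFix` is whatever small change the page needs.
// Returns true if the fix ran on this page, false otherwise.
function runCustomization(win, applyFix) {
  const doc = win.document;
  const onTargetPage = win.location.pathname === TARGET_PATH;

  // Only do the real work when the page in question is being viewed.
  if (onTargetPage) {
    applyFix(doc);
  }

  // Clean up: remove the injected element from the DOM (assumed to happen on every page).
  const injected = doc.getElementById(INJECTED_ID);
  if (injected && injected.parentNode) {
    injected.parentNode.removeChild(injected);
  }
  return onTargetPage;
}

// In the browser this would be called as:
// runCustomization(window, (doc) => { /* the actual fix goes here */ });
```

Keeping the page check first means that everywhere else the script does almost nothing beyond removing itself.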

It’s not foolproof, of course. If the user isn’t running JavaScript then my trick won’t work; however, half the system won’t work for them anyway, since JavaScript is required. Also, if someone is sneaky enough they can load the original source and see where I injected my code. But if they are going to do that, we have bigger problems than them simply disabling my script.

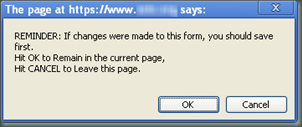

OK, that covers the techy issues. What about the people ones? Well, as you may have guessed, no matter how many times we alerted our users to the changes we made, some users started calling the vendor’s Help Desk asking what happened to the report they were used to. The vendor then called me after spending two days trying to solve an issue they weren’t seeing on their end. They didn’t really care for the changes. They understood the necessity and gave me props for how I handled it, but now their Help Desk had no idea if a problem call was because of their system or my code. Granted, this totally makes sense.

So, they gave us a choice. We can continue to develop our own “customizations,” but we have to take over all Help Desk calls for our users for the entire system. I understand that, but directly supporting 5,000 users is not something we are capable of doing. I have to note also that the Help Desk is absolutely outstanding, one of the best I have ever worked with.

So, after the vendor agreed to look into implementing our change, we are allowed to keep the code and support the Help Desk for any questions regarding this one issue. Once the vendor has solved the problem, we will remove our code and go on our way.

So, here is the moral of the story: Hacking your vendor’s site opens doors to lots and lots of capabilities. However, it may aggravate your vendor a little or, quite possibly, cause an early termination of your contract. I suggest you keep this little tool in your developer’s pocket for extreme cases.

It was fun and our vendor is great. Once I put the change in place we started to come up with lists of additional “nice to have” features I could implement, but, in the end, we’ll just hand those over to our vendor and see if they make it in someday.